Original post: "Implementing Simple Linear Regression from scratch" by Abhishek Dhule, on Kaggle.

Import libraries

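The import cell itself is not reproduced here. A minimal sketch, assuming the walkthrough only needs NumPy for the arithmetic and Matplotlib for the plots (both are assumptions, not confirmed by the original notebook):

```python
# Assumed imports: the original notebook may have used a different set.
import numpy as np               # array math and random number generation
import matplotlib.pyplot as plt  # scatter and line plots
```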

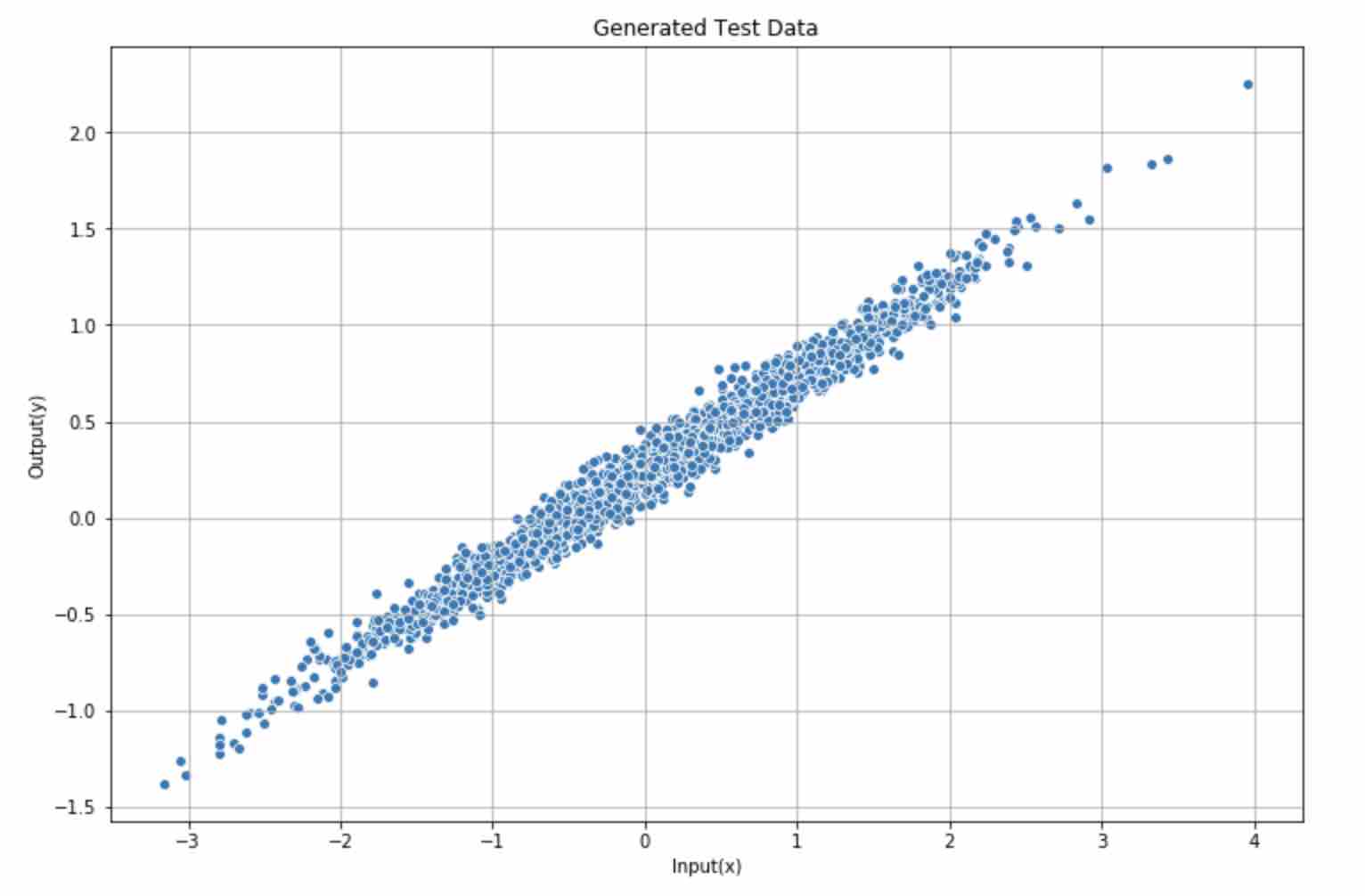

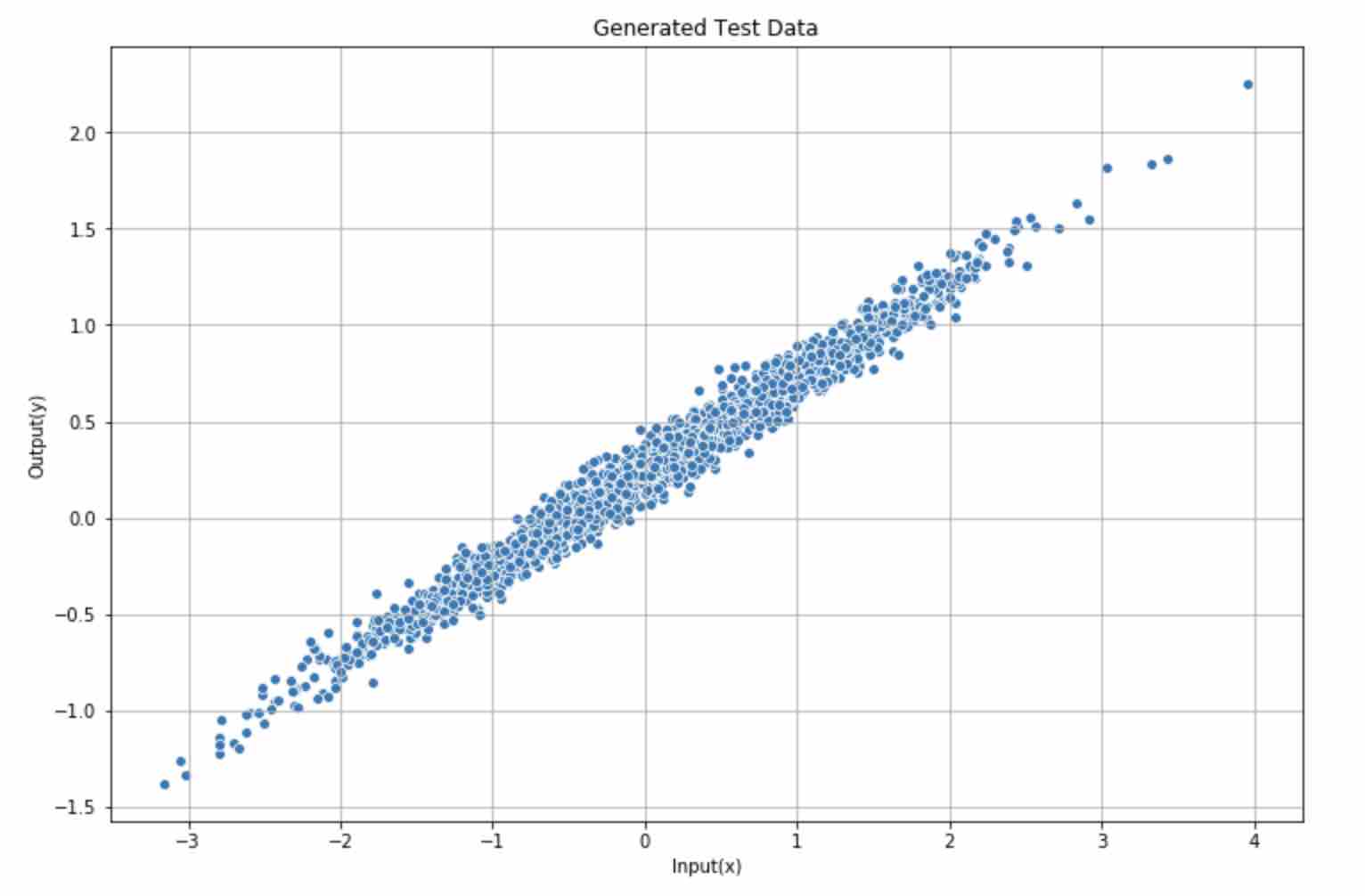

Generate test data

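One way to generate test data for a simple linear regression is to sample points along a known line and add Gaussian noise. The sketch below does that. The true slope and intercept, the sample count, the noise level, and the helper name `generate_data` are all hypothetical choices made for illustration, not values taken from the original notebook.

```python
import numpy as np

# Hypothetical ground truth used to synthesize the data.
TRUE_SLOPE = 2.0
TRUE_INTERCEPT = 5.0


def generate_data(n_samples=100, noise_std=1.0, seed=0):
    """Return (x, y) where y = TRUE_SLOPE * x + TRUE_INTERCEPT + noise."""
    rng = np.random.default_rng(seed)
    # Inputs drawn uniformly from [0, 10).
    x = rng.uniform(0.0, 10.0, size=n_samples)
    # Zero-mean Gaussian noise with standard deviation noise_std.
    noise = rng.normal(0.0, noise_std, size=n_samples)
    y = TRUE_SLOPE * x + TRUE_INTERCEPT + noise
    return x, y


x, y = generate_data()
```

Fixing the seed keeps the data reproducible, so a fitted slope and intercept can be compared against the known line across runs.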

Plot generated data

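A scatter plot is a natural way to inspect generated data before fitting. The following is a rough sketch, assuming Matplotlib and the same kind of synthetic data (a line with slope 2 and intercept 5 plus noise, both assumed values). The helper name `plot_data` is hypothetical.

```python
import numpy as np
import matplotlib.pyplot as plt


def plot_data(x, y):
    """Scatter-plot the samples and return the Axes for further use."""
    fig, ax = plt.subplots()
    ax.scatter(x, y, s=15, label="generated data")
    ax.set_xlabel("x")
    ax.set_ylabel("y")
    ax.set_title("Generated data")
    ax.legend()
    return ax


# Synthetic data: y = 2x + 5 plus Gaussian noise (assumed values).
rng = np.random.default_rng(0)
x = rng.uniform(0.0, 10.0, size=100)
y = 2.0 * x + 5.0 + rng.normal(0.0, 1.0, size=100)

ax = plot_data(x, y)
# Call plt.show() here when running interactively.
```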

Define linear regression model

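A from-scratch model for simple linear regression can be sketched with the closed-form least-squares estimates: the slope is the covariance of x and y divided by the variance of x, and the intercept is chosen so the line passes through the point of means. This is an assumption about the approach; the original notebook may instead fit the line iteratively (for example with gradient descent). The class name `SimpleLinearRegression` and its methods are hypothetical.

```python
import numpy as np


class SimpleLinearRegression:
    """Least-squares fit of y = slope * x + intercept (illustrative sketch)."""

    def __init__(self):
        self.slope = None
        self.intercept = None

    def fit(self, x, y):
        x = np.asarray(x, dtype=float)
        y = np.asarray(y, dtype=float)
        x_mean, y_mean = x.mean(), y.mean()
        # Sum of squared deviations of x; zero means all x are identical.
        denom = np.sum((x - x_mean) ** 2)
        if denom == 0:
            raise ValueError("x must contain at least two distinct values")
        self.slope = np.sum((x - x_mean) * (y - y_mean)) / denom
        # The fitted line passes through (x_mean, y_mean).
        self.intercept = y_mean - self.slope * x_mean
        return self

    def predict(self, x):
        if self.slope is None:
            raise RuntimeError("call fit() before predict()")
        return self.slope * np.asarray(x, dtype=float) + self.intercept
```

Returning `self` from `fit` allows chaining, as in `SimpleLinearRegression().fit(x, y).predict(x_new)`.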

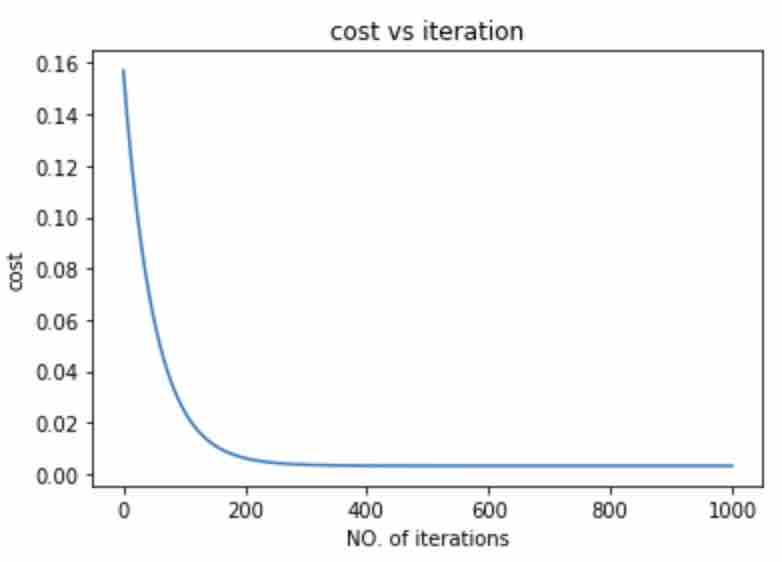

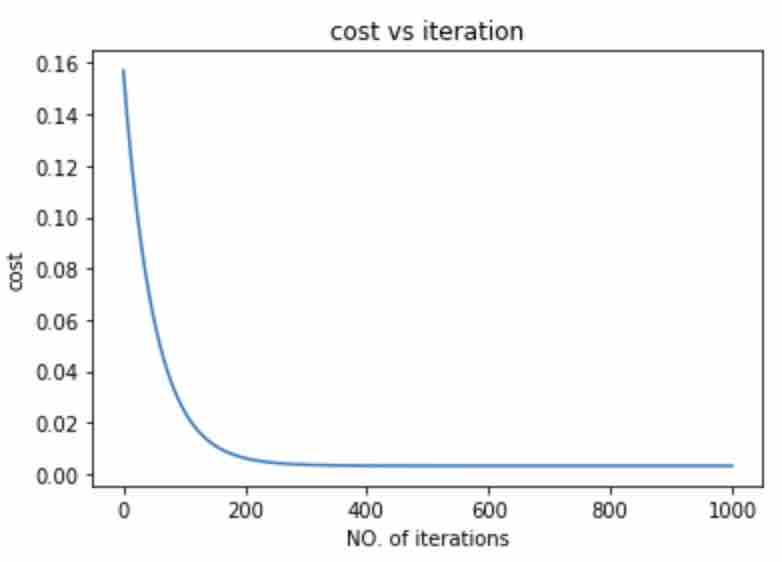

Run model

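Running the model amounts to fitting on the generated data and checking how close the estimates come to the line used to create it. The sketch below is self-contained and assumes closed-form least squares plus synthetic data with slope 2 and intercept 5. These values and the helper name `fit_line` are illustrative, not taken from the original notebook.

```python
import numpy as np


def fit_line(x, y):
    """Return (slope, intercept) of the least-squares line through (x, y)."""
    x_mean, y_mean = x.mean(), y.mean()
    slope = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)
    intercept = y_mean - slope * x_mean
    return slope, intercept


# Synthetic data: y = 2x + 5 plus Gaussian noise (assumed values).
rng = np.random.default_rng(0)
x = rng.uniform(0.0, 10.0, size=100)
y = 2.0 * x + 5.0 + rng.normal(0.0, 1.0, size=100)

slope, intercept = fit_line(x, y)

# Mean squared error of the fitted line on the training data.
y_pred = slope * x + intercept
mse = np.mean((y - y_pred) ** 2)

print(f"slope={slope:.3f}, intercept={intercept:.3f}, mse={mse:.3f}")
```

With unit-variance noise, the estimates should land near the assumed slope and intercept, and the mean squared error should be close to the noise variance.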

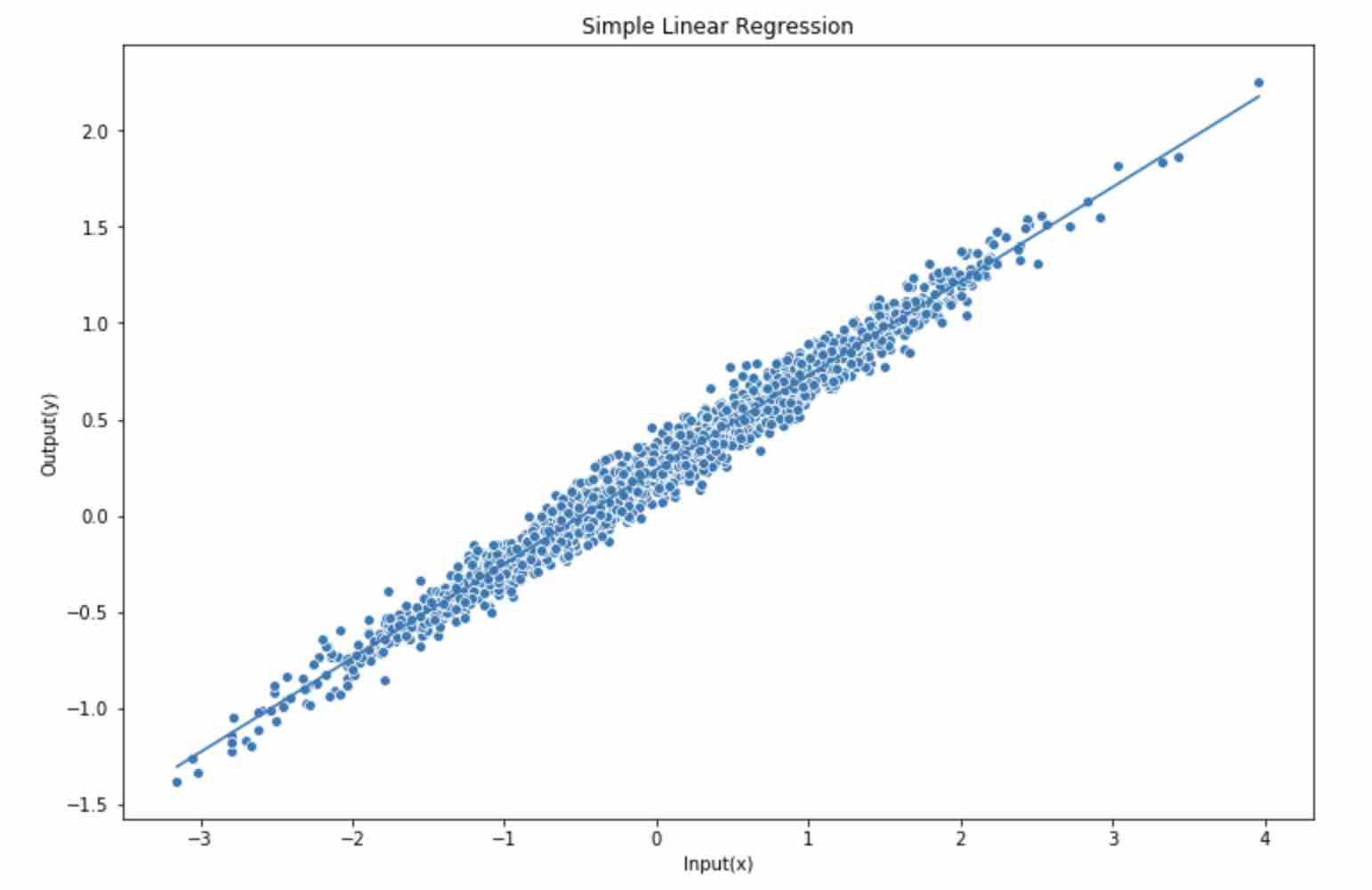

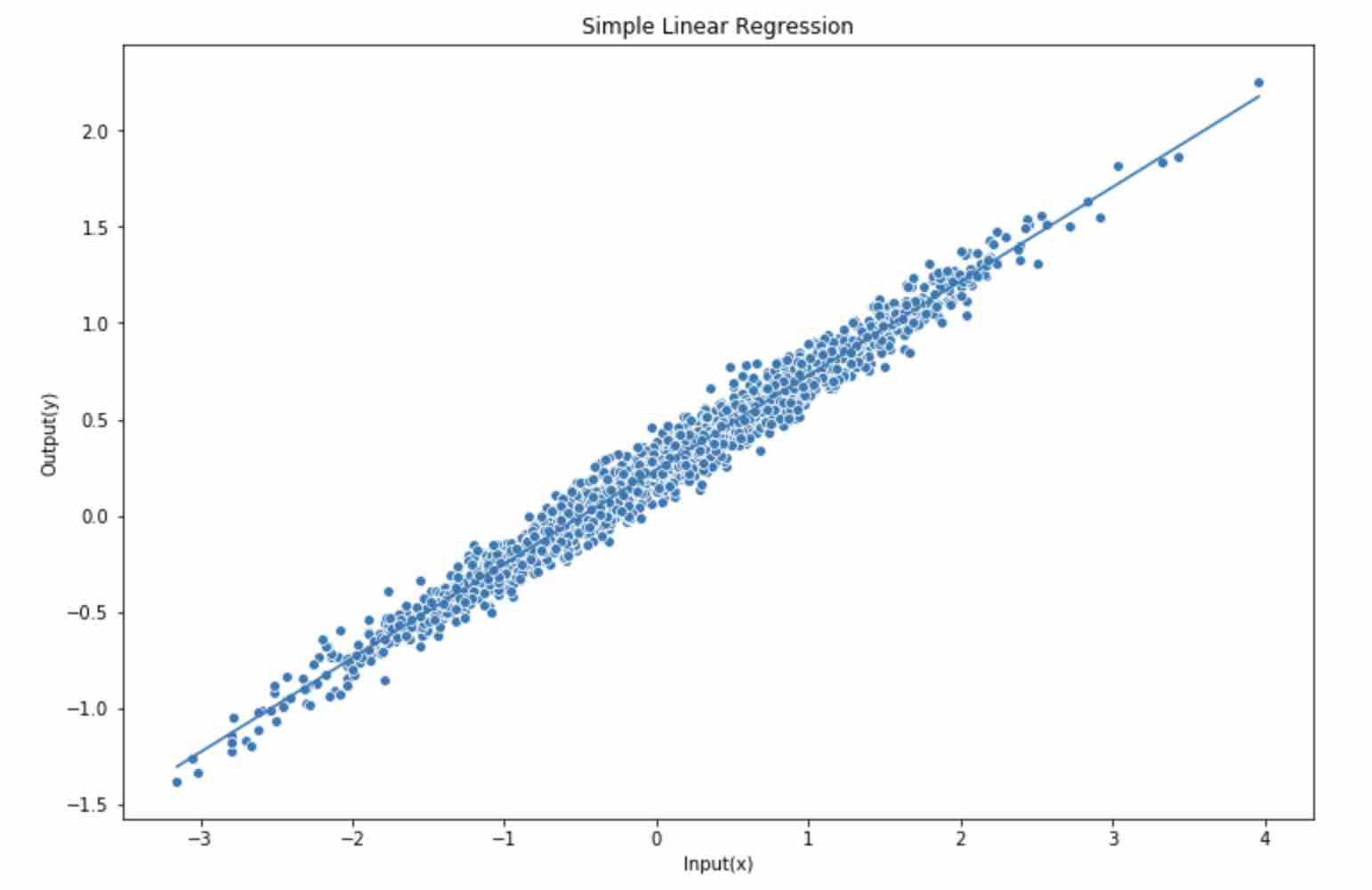

Plot predicted linear function

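Overlaying the fitted line on the scatter plot gives a quick visual check of the fit. A minimal sketch, assuming Matplotlib, closed-form least squares, and the same assumed synthetic data as before. The helper name `plot_fit` is hypothetical.

```python
import numpy as np
import matplotlib.pyplot as plt


def plot_fit(x, y, slope, intercept):
    """Scatter the data, draw the fitted line, and return the Axes."""
    fig, ax = plt.subplots()
    ax.scatter(x, y, s=15, label="data")
    # Two endpoints are enough to draw a straight line.
    x_line = np.array([x.min(), x.max()])
    ax.plot(x_line, slope * x_line + intercept, color="red", label="fitted line")
    ax.set_xlabel("x")
    ax.set_ylabel("y")
    ax.legend()
    return ax


# Synthetic data: y = 2x + 5 plus Gaussian noise (assumed values).
rng = np.random.default_rng(0)
x = rng.uniform(0.0, 10.0, size=100)
y = 2.0 * x + 5.0 + rng.normal(0.0, 1.0, size=100)

# Closed-form least-squares estimates.
x_mean, y_mean = x.mean(), y.mean()
slope = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)
intercept = y_mean - slope * x_mean

ax = plot_fit(x, y, slope, intercept)
# Call plt.show() here when running interactively.
```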
